As Wacom continues to iterate on products that are built for creative filmmaking workflows, we’re always looking to learn from those who are pushing the boundaries in their industry. In the interview below, animator and filmmaker HaZ Dulull explains how he bridged tools like Unreal Engine and DaVinci Resolve while using a Wacom Cintiq Pro to create his animated sci-fi feature film, RIFT.

How did the idea for RIFT come about?

The idea for RIFT was actually based on a story idea I created back in 2019 called “Brother”, which we then developed further into an early version of RIFT that was going to be fully live action. We then shelved it as we focused on other projects, mainly because it was going to be very expensive to shoot (we’re talking about physics-defying action sequences and multiverses!).

But then when the pandemic hit, we had to pause our other live-action project. To stay relevant in an industry where content is king, we pivoted towards animation so we could stay busy with production and keep creating content (a feature film). In that process, my producing partner Paula Crickard and I revisited the project, and we realized that animation was the perfect medium for the ambitious ideas in the script we had developed with writer Stavros Pamballis.

Then in October 2020, CG Supervisor Andrea Tedeschi and I decided to do a test sequence for the project. We shared it with a few industry people, including Epic Games, and from there it was clear we could do this and that there was an appetite for adult animation.

What creative boundaries were you looking to push with the production?

There were several boundaries we were pushing, mainly from a production and disruptor side of things, such as producing an entire 93-minute feature film completely remotely, entirely in Unreal Engine, and with a small team. Oh, and the entire thing is FINAL PIXELS, meaning we don’t do the conventional CG thing, where you render out passes, etc., then composite them together and apply comp FX before getting a final shot.

In our case it was “what you see is what you get”, straight out of the Unreal Engine viewport as EXR frames. This meant we could work in an agile way and keep iterating. Some shots had so many iterations because of this final pixels approach.

What existing production challenges did you look to improve when creating RIFT?

Final pixels is something that you see in video games or interactive content, but what we were doing was taking a real-time workflow and then rendering it out as frames for linear film content. So things like particles or physics, which are usually real-time things, can be unpredictable when rendered out for linear content (film). We had to really push Unreal Engine’s Sequencer to make things work when rendering out as EXRs.

The other production challenge, which was probably the biggest one, was the overall look and style of the movie. We didn’t want it to look like a video game cinematic. We wanted it to have its own unique look that stood on its own. I was particularly drawn to anime movies like Akira, Ghost in the Shell, Cybercity Oedo, and more, when I was in my late teens, so I wanted to evoke that kind of energy when creating a hybrid cel-shaded and CG look for RIFT.

At the time of production (Note: RIFT was created entirely in Unreal Engine 4.26.2) there wasn’t much cel shader support in Unreal, apart from some post-processing effects. These didn’t really work for us, because we noticed that when the camera moved around or specific lighting was applied, the edge lines and reflections didn’t look very good and the image looked very “filtered”.

So we spent a lot of time developing our own set of shaders, which also meant we had to think about the way we designed and built out characters (using Reallusion Character Creator) in order to achieve the look I wanted.

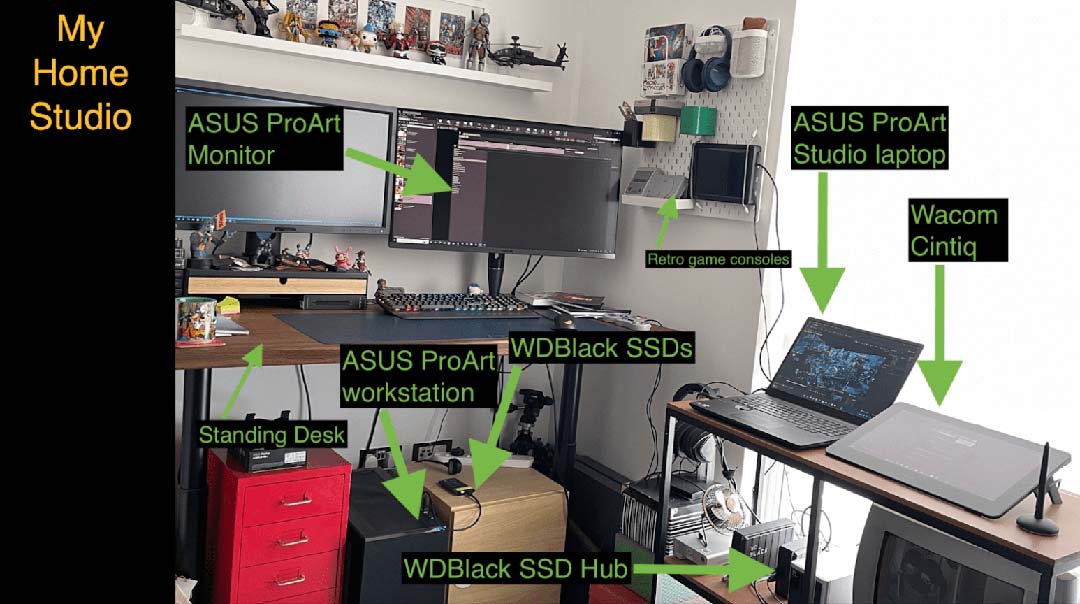

What was your tech setup alongside the Cintiq Pro? What software and hardware were key in creating RIFT?

We were very fortunate to have got some really great hardware tech-sponsorship support, which helped massively. ASUS came on board very early on with their ProArt Workstation and ProArt PA32UCG 4K HDR monitor, fitted with an NVIDIA RTX A6000 card. The entire movie was actually rendered out on that single machine and card.

Western Digital then came on board, providing WD_BLACK SSDs that let me work fast with the Unreal Engine cache data and also held the 4K EXRs, which were rendered to those drives as reels (the film was split into 8 reels in total).

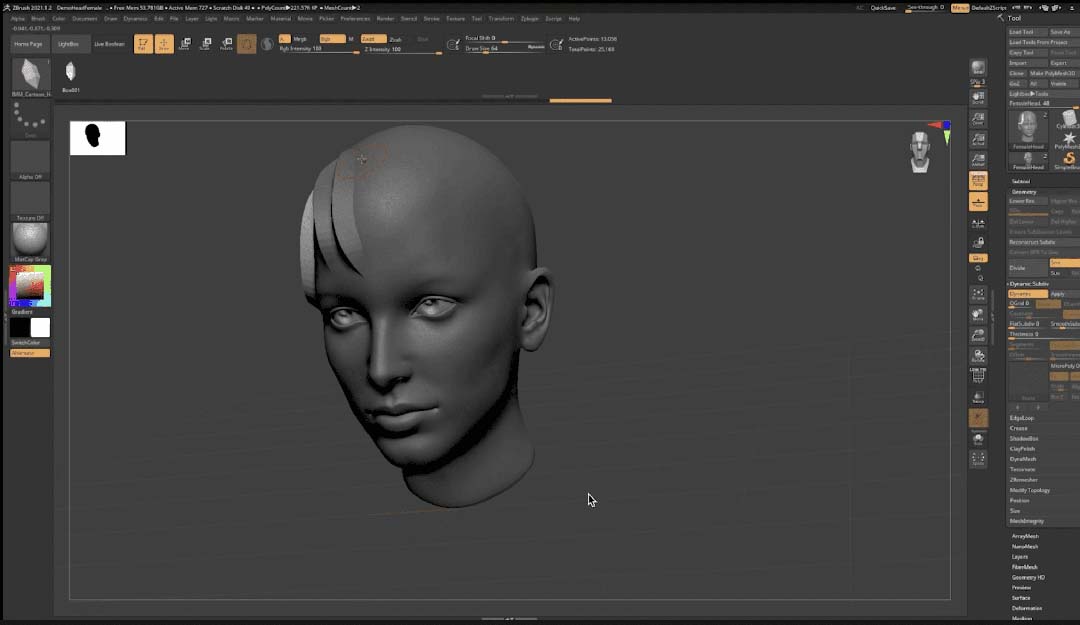

But whilst working on the main shots, I wanted to be able to work on editorial at the same time. Later in production, as my machine was rendering out the queue/batch of shots, I was editing on the ASUS StudioBook 16 OLED laptop connected to my Wacom Cintiq Pro 16. My Head of CG, Andrea Tedeschi, also used his Wacom Cintiq Pro to sculpt hair geometry for our characters using ZBrush.

How integral was the Wacom Cintiq Pro to your creative process?

The Cintiq Pro came in midway through production of the film (I was using a Wacom Bamboo before) and I was blown away by the large screen and work area. I decided to use it with DaVinci Resolve as well as Unreal Engine to explore ways we could be creative with it on RIFT, as well as on our other projects.

As a production company that produces original IP rather than servicing other IP, we always have to be exploring new ways to create content as well as ways to make our productions more efficient.

When working in Unreal Engine, I spent 90% of my time inside Sequencer, which is essentially the timeline of a scene/shot. Editorial is a key component in my storytelling process; even in pre-production I’m editing previs to craft the story early on.

In fact, instead of me telling you my creative process, let me show you!

Watch the video below showing how HaZ works directly in Unreal Engine Sequencer with his Wacom Cintiq Pro to have a much more immersive experience with his filmmaking process:

How did you utilize Touch OSC/Unreal Engine/Cintiq Pro together? How did you discover these capabilities?

I was exploring other production opportunities using Touch OSC with Unreal Engine. No one seems to be pushing Touch OSC in the Unreal Engine editor (not for real-time games, but for actual cinematic creation), so we took it upon ourselves to explore and develop that on our end.

Andrea and Ernesto Arguello figured out how to create some basic Touch OSC tools in Unreal to give me the ability as a director to choreograph specific actions like lighting tweaks and explosions.
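For readers curious about what a controller like this sends over the network, here is a minimal sketch of a single OSC 1.0 message carrying one float, roughly what a fader on a touch surface might emit. It is not HaZimation's actual tooling: the address `/rift/key_light/intensity`, the host, and the port `8000` are hypothetical, and the receiving side would need its own listener configured to match.

```python
import socket
import struct


def osc_pad(raw: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes, as OSC 1.0 strings require."""
    padded_len = (len(raw) // 4 + 1) * 4
    return raw.ljust(padded_len, b"\x00")


def osc_float_message(address: str, value: float) -> bytes:
    """Build an OSC message: padded address, type tag ',f', big-endian float32."""
    return osc_pad(address.encode("ascii")) + osc_pad(b",f") + struct.pack(">f", value)


def send_osc(packet: bytes, host: str = "127.0.0.1", port: int = 8000) -> None:
    """Send one OSC packet over UDP. Host and port here are assumed values."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, port))
```

Calling `send_osc(osc_float_message("/rift/key_light/intensity", 0.75))` would send one value. A slider that streams such messages while it is dragged is one plausible way a lighting tweak could be driven live.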

Watch the video showing the tools HaZimation developed early on utilizing Touch OSC with Unreal Engine and Wacom Cintiq Pro:

We’re big fans of OTOY Sculptron at Wacom. Can you describe how you used Sculptron in your workflow? What potential do you see with this software?

Although we didn’t use it in the main shots of the film, we did explore it for a few shots in RIFT, and what we found super useful was that you can sculpt your facial expressions and then export them as blend shapes. It felt so liberating, and the results had this kind of organic feel to them.

Watch the video showing HaZ and his team experimenting with Sculptron in RIFT:

What’s next for you?

We are currently screening RIFT at various festivals. We just had RIFT screen at the Oscar-accredited SPARKS Festival in Vancouver, and a few days ago we had the UK premiere at the BAFTA-qualifying British Urban Film Festival.

Project-wise, we are in production on two of our video game IPs, one being the spin-off game for RIFT and the other SYNCROMANIA (both for Xbox console), which will be announced early next year.

We are also producing a huge metaverse project called XLANTIS, which involves open-world gameplay, whilst also prepping for our next animated feature film project to start next year.

All of those projects are produced using our Unreal Engine pipeline, with, of course, the Wacom Cintiq Pro being used in all areas of the productions.

Want to keep up with HaZ?

- Check out his website

- Follow HaZ on Instagram, Twitter, or Facebook